DaCHS 2.4 is out: Blind discovery, pretty datalink, and more

DaCHS 2.4: automatic ranges (with registry support!), pretty datalink (with vocabulary support!). And then the usual bunch of improvements (hopefully!).

I have released DaCHS 2.4 today, and as usual for stable releases, I would like to have something like a commented changelog here so DaCHS deployers perhaps look forward to upgrading – which would be good, because there are far too many outdated DaCHSes out there.

Among the more notable changes in version 2.4 are:

Blind discovery overhaul. If you followed my requests three years ago to include coverage metadata, you have probably felt that the way DaCHS started to hack your RDs to include the metadata it had obtained from the data was a bit odd. Well, it was. DaCHS no longer does that when running dachs limits. While you can still do manual overrides, all the statistics gathered by DaCHS are now kept in the database and injected into DaCHS' internal idea of your RDs at loading time.

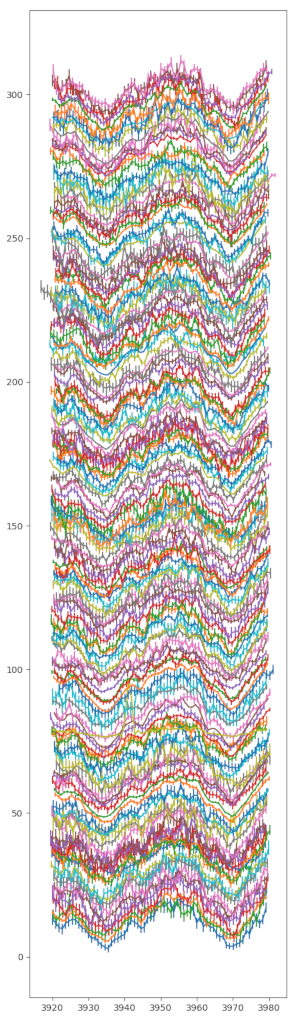

I have not only changed this because the old way really sucked; it was also necessary because I wanted to have per-column metadata routinely, and since in advanced DaCHS there often are no XML literals for columns (because of active tags), there wouldn't be a place to keep information like what a column is minimally, maximally, in median, or as a “2σ range” within the RD itself. A longer treatment of where this is going is given in the IVOA note Blind Discovery 2: Advanced Column Statistics that Grégory and I have recently uploaded.
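To make the kind of per-column statistics meant here a bit more tangible, here is a minimal Python sketch. It is not what dachs limits runs internally; in particular, reading the “2σ range” as the central 95% of the values (2.5th to 97.5th percentile) is my assumption for illustration, and the function name `column_stats` is made up.

```python
import statistics


def column_stats(values):
    """Return min, max, median, and an approximate central 95% interval.

    NULLs (None) are ignored.  Interpreting the "2-sigma range" as the
    2.5th..97.5th percentile interval is an assumption of this sketch.
    """
    data = sorted(v for v in values if v is not None)
    if not data:
        return None
    if len(data) < 2:
        # quantiles() needs at least two points on older Pythons
        low = high = data[0]
    else:
        # n=40 gives cut points at 2.5%, 5%, ..., 97.5%
        cuts = statistics.quantiles(data, n=40, method="inclusive")
        low, high = cuts[0], cuts[-1]
    return {
        "min": data[0],
        "max": data[-1],
        "median": statistics.median(data),
        "range_2sigma": (low, high),
    }
```

The point of keeping the quantile-based range next to the plain minimum and maximum is that a few outliers will not blow up the range a client sees.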

For you, it's easy: Just run dachs limits q once you're happy with your data, or perhaps once a month for living data, and leave the rest to DaCHS. A fringe benefit: in browser forms, there are now value ranges of the various numeric constraints as placeholders (that's the screenshot on the left in the title picture).

There is a slight downside: As part of this overhaul, DaCHS is now computing the coverage of SIAP and SSAP services based on the footprints of the products as MOCs. While that gives much more precise service footprints, it only works with bleeding-edge pgsphere as delivered in Debian bullseye – or from our Debian repository. If you want to build this from source, you need to get credativ's pgsphere fork for now.

Generate column elements: If you have tables with many columns, even just typing in the <column> elements becomes a strain. That is particularly annoying if there already is a halfway machine-readable representation of that data.

To alleviate that, very early in the development of DaCHS, I had the gavo mkrd subcommand that you could feed FITS images or VOTables to get template RDs. For a number of reasons, that never worked well enough to make me like or advertise it, and I eventually ended up writing dachs start instead, which is something I like and advertise for general usage.

However, what that doesn't do is come up with the column declarations. To make good on this, there is now a dachs gencol command that will, from a FITS binary table, a VOTable, or a VizieR-style byte-by-byte description, generate columns with as much metadata as it can fathom. Paste that into the output of dachs start, and, depending on your input format, you should have a quick start on a fairly full-featured data collection (also note there's dachs adm suggestucds for another command that may help quickly generate rich metadata).
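To illustrate the idea for the byte-by-byte case, here is a rough Python sketch that turns one line of a VizieR-style description into a column element. This is not how dachs gencol is implemented; the function name, the regular expression, and the mapping from format letters to column types are all assumptions made for this example.

```python
import re
from xml.sax.saxutils import quoteattr

# Assumed mapping from ReadMe format letters to RD column types.
TYPE_FOR_FORMAT = {
    "I": "integer",
    "F": "double precision",
    "E": "double precision",
    "A": "text",
}

# One line of a byte-by-byte description:
# bytes, format, units, label, explanation.
BBB_LINE = re.compile(
    r"^\s*(?:\d+\s*-\s*)?\d+\s+"     # byte range like "1-  6" or just "12"
    r"(?P<fmt>[AIFE])[\d.]*\s+"      # format like "I6" or "F6.2"
    r"(?P<unit>\S+)\s+"
    r"(?P<label>\S+)\s+"
    r"(?P<expl>.*)$")


def column_from_bbb(line):
    """Return a <column> element (as a string) for a byte-by-byte line."""
    m = BBB_LINE.match(line)
    if m is None:
        raise ValueError("Not a byte-by-byte line: %r" % line)
    attrs = [
        ("name", m["label"].lower()),
        ("type", TYPE_FOR_FORMAT[m["fmt"]]),
    ]
    if m["unit"] != "---":           # "---" marks a unitless column
        attrs.append(("unit", m["unit"]))
    attrs.append(("description", m["expl"].strip()))
    return "<column %s/>" % " ".join(
        "%s=%s" % (key, quoteattr(value)) for key, value in attrs)
```

Fed a line like `   8- 13  F6.2  mag     Vmag    Visual magnitude`, this yields a column named vmag with unit mag; things like UCDs would still have to come from elsewhere.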

This currently doesn't work for products (i.e., tables of spectra, images, and the like); at least for FITS arrays, I suppose turning their non-obvious header cards into columns might save some work. Let's see: your feedback is welcome.

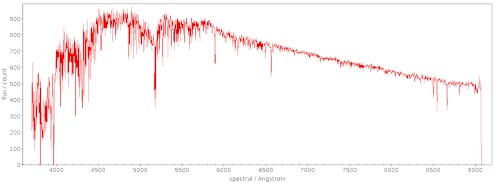

Refurbished Datalink XSLT: Since the dawn of datalink, DaCHS has delivered Datalink documents with XSLT stylesheets in order to have nicely formatted pages rather than wild XML when web browsers chance on datalink documents. I have overhauled the JavaScript part of this (which, I have to admit, is what makes it pretty). For one, the spatial cutout now works again, and it's modeless (no clicking “edit” any more before you can drag cutout vertices). I'm also using the datalink/core vocabulary to furnish link groups with proper titles and descriptions, and to have them sorted in a proper result tree. I've talked about it at the interop, and I've prepared a showcase of various datalink documents in the Heidelberg data centre.

Update to DaCHS 2.4 and you'll get the same thing for your datalinks.

Non-product datalinks: When writing a datalink service, you have to first come up with a descriptor generator. DaCHS will provide a simple one for you (or perhaps a bit more complex ones for FITS images or spectra) – but all of these assume that whatever the datalink ID parameter references is in DaCHS' product table. It turned out that in many interesting cases – for instance, attaching time series to object catalogues – that is not the case, and then you had to write rather obscure code to keep DaCHS from poking around in the product table.

No longer: There is now the //datalink#fromtable descriptor generator. Just fill in which column contains the identifier and the name of the table containing that column and you're (basically) done. Your descriptor will then have a metadata attribute containing the relevant row – along with everything else DaCHS expects from a datalink descriptor.
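Conceptually, what such a descriptor generator does can be sketched in a few lines of Python. The following is an illustration only, using sqlite3 so it runs anywhere; the class and function names are hypothetical and do not reflect DaCHS' actual code or API.

```python
import sqlite3


class RowDescriptor:
    """Hypothetical stand-in for a datalink descriptor."""

    def __init__(self, pub_did, metadata):
        self.pubDID = pub_did
        # the row for pub_did as a dict mapping column names to values
        self.metadata = metadata


def descriptor_from_table(conn, table_name, id_column, pub_did):
    """Return a descriptor for the row where id_column equals pub_did.

    table_name and id_column are assumed to come from trusted
    configuration (they are interpolated into the query); only the
    identifier itself is passed as a bound parameter.
    """
    cursor = conn.execute(
        "SELECT * FROM %s WHERE %s = ?" % (table_name, id_column),
        (pub_did,))
    row = cursor.fetchone()
    if row is None:
        raise KeyError(pub_did)
    names = [col[0] for col in cursor.description]
    return RowDescriptor(pub_did, dict(zip(names, row)))
```

Whatever produces the links afterwards can then pick values like positions or object names out of `metadata` without ever touching a product table.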

gavo_specconv: That's a longer story covered previously on this blog.

Index declaration in views: Saying on which columns a database index exists allows users to write smart queries, and DaCHS uses such information internally when rewriting geometrical expressions from ADQL to whatever is in use in the actual database. Hence, making sure these indexes are properly declared is important. But at the same time it's difficult for views, because postgres doesn't let you have indexes on views (for good reasons). Still, queries against views will (usually) use indexes of their underlying tables, and hence those should be declared in the corresponding metadata.

This is tedious in general. DaCHS now helps you with the //procs#declare-indexes-from stream. Essentially, it will compare the columns in the view with the ones from the source tables and then guess which view columns correspond to indexed columns from the source tables; using that, it adds indexed flags to some view columns.
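The guessing can be pictured roughly like the following Python sketch. It assumes view columns and source columns are matched by name, which is a simplification and may well differ from what DaCHS actually does; the function name and the data layout are invented for this example.

```python
def guess_indexed_view_columns(view_columns, source_tables):
    """Return the view columns that probably profit from a source index.

    view_columns is a list of column names in the view; source_tables is
    a list of dicts, each with an "indexed" set of column names.
    Matching by name is an assumption of this sketch.
    """
    indexed_in_sources = set()
    for table in source_tables:
        indexed_in_sources.update(table["indexed"])
    # keep the order of the view's columns
    return [name for name in view_columns if name in indexed_in_sources]


view = ["accref", "ssa_location", "ssa_pubdid"]
sources = [
    {"name": "raw_spectra", "indexed": {"ssa_pubdid", "ssa_location"}},
    {"name": "extra_meta", "indexed": set()},
]
flagged = guess_indexed_view_columns(view, sources)
```

Here, `flagged` ends up containing ssa_location and ssa_pubdid, which are the view columns that would receive the indexed flag.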

If all this is too weird for you: Thanks to declare-indexes-from, the index declaration now automatically happens in the modern way to build SSAP services, the //ssap#view mixin. Hence, chances are you won't even see this particular STREAM but just notice its beneficial consequences.

Sunsetting resources: I've been fiddling off and on with a smart way to pull resources I no longer want to maintain while still leaving a tombstone. I had to re-visit this problem recently because I dropped the Gaia DR1 table from my Heidelberg data centre. So, how do I explain to people why the thing that's been there no longer is?

In general, this is a rather intractable problem; for instance, it's very hard to do something sensible with the TAP_SCHEMA entries or the VOSI tables endpoints for the tables that went away. Pure web pages, on the other hand, can be adorned with helpful info. To enable that, there is now the superseded meta item, which you define in the RD that once held the resources. For Gaia DR1, here's what I used:

    <meta name="superseded" format="rst">
      We do not publish Gaia DR1 data here any more.  If you actually need
      DR1 data, refer to the full Gaia mirrors, for instance `the one at ARI`_.
      Otherwise, please use more recent data releases, for instance `eDR3`_.

      .. _the one at ARI: http://gaia.ari.uni-heidelberg.de
      .. _eDR3: /browse/gaia/q3
    </meta>

Root page template: I slightly streamlined the default root page template, in particular dropping the "i" and "Q" icons for going to the metadata and querying the service. If you have overridden the root template, you may want to check whether to merge the changes.

As usual, there are many more small repairs and additions, but most of these are either very minor or rather technical. One last thing, though: DaCHS now works with Python 3.8 (3.7 will continue to be supported for a few years at least, earlier 3.x never was), which is going to be the python3 in Debian bullseye. Bullseye itself will only have DaCHS 2.3 (with the Python 3.8 fixes backported), though. Once bullseye has become stable, we will look into putting DaCHS 2.4 into the backports.
