GAVO at the AG-Tagung in Köln

As every year, GAVO participates in the fall meeting of the Astronomische Gesellschaft (AG), the association of astronomers working in Germany. This year, the meeting is hosted by the Universität zu Köln (a.k.a. University of Cologne), and I want to start by thanking them and the AG staff for placing our traditional booth smack next to a coffee break table. I anticipate with glee our opportunities to run our pitches on how much everyone is missing out if they're not doing VO while people are queueing up for coffee. Excellent.

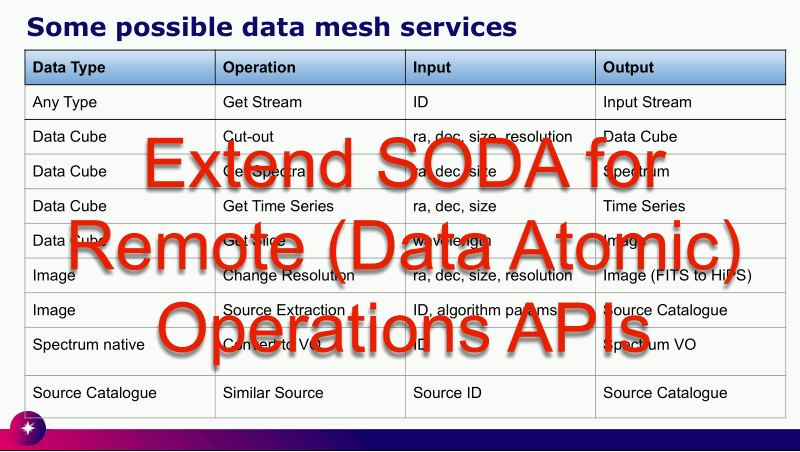

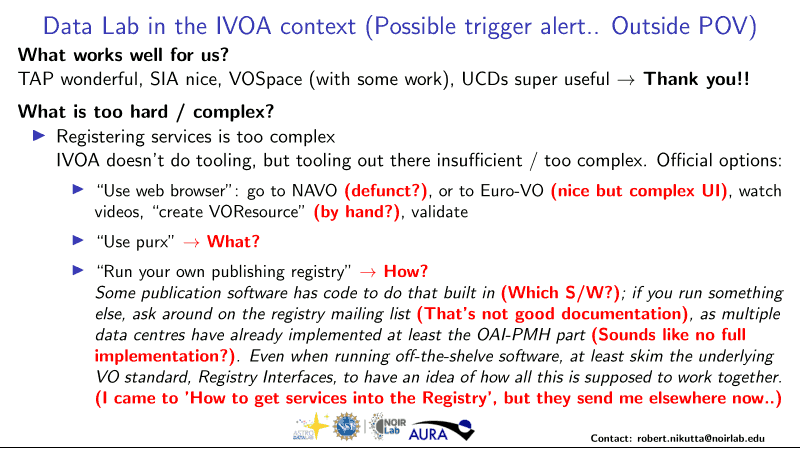

As every year, we are co-conveners for a splinter meeting on e-science and the virtual observatory, where I will be giving a talk on global dataset discovery (you heard it here first; lecture notes for the talk) late on Thursday afternoon.

And as every year, there is a puzzler, a little problem rather easily solvable using VO tools; I was delighted to see people apparently already waiting for it when I handed out the problem sheet during the welcome reception tonight. You are very welcome to try your hand at it, but you only get to enter our raffle if you are on site. This year, the prize is a towel (of course) featuring a great image from ESA's Mars Express mission, where Phobos floats in front of Mars' limb:

I will update this post with the hints we are going to give out during the coffee breaks tomorrow and on Wednesday. And I will post our solution here late on Thursday.

At our booth, you will also find various propaganda material, mostly covering matters I have mentioned here before; for posteriority and remoteriority, let me link to PDFs of the flyers/posters I have made for this meeting (with re-usability in mind). To advertise the new VO lectures, I am asking Have you ever wished there was a proper introduction to using the Virtual Observatory? with lots of cool DOIs and perhaps less-cool QR codes. Another flyer trying to gain street cred with QR codes is the Follow us flyer advertising our Fediverse presence. We also still show a pitch for publishing with us and hand out the inevitable who we are flyer (which, I'll readily admit, has never been an easy sell).

Bonferroni for Open Data?

A real classic drew a lot more feedback than the QR code-heavy posters: Lame excuses for not publishing data, which I have shown at many AG meetings since the 2013 Tübingen meeting.

A tricky piece of feedback on that was an excuse that may actually be a (marginally) valid criticism of open data in general. You see, in particular in astroparticle physics (where folks are usually particularly uptight with their data), people run elaborate statistics on their results, inspired by the sort of statistics they do in high energy physics (“this is a 5-sigma detection of the Higgs particle”). When you do this kind of thing, you do run into a problem when people run new “tests” against your data because of the way test theory works. If you are actually talking about significance levels, you would have to apply Bonferroni corrections (or worse) when you do new tests on old data.

This is actually at least not untrue. If you do not account for the slight abuse of data and tests of this sort, the usual interpretation of the significance level – more or less the probability that you will reject a true null hypothesis and thus claim a spurious result – breaks down, and you can no longer claim things like “aw, at my significance level of 0.05, I'll make spurious claims only one out of twenty times tops”.
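To put rough numbers on that, here is a minimal sketch of how quickly the “one out of twenty” promise erodes when several tests are run against the same data. It assumes the tests are independent and that all null hypotheses are true, which is a simplification and certainly not how real re-analyses behave; the function names are mine and purely illustrative. The Bonferroni correction itself simply divides the significance level by the number of tests.

```python
def familywise_error_rate(alpha: float, n_tests: int) -> float:
    """Probability of at least one spurious rejection among n_tests
    tests of true null hypotheses, each run at level alpha.

    Assumption: the tests are statistically independent.
    """
    return 1.0 - (1.0 - alpha) ** n_tests


def bonferroni_level(alpha: float, n_tests: int) -> float:
    """Per-test level that keeps the overall error rate at or below alpha."""
    return alpha / n_tests


if __name__ == "__main__":
    alpha = 0.05
    for n_tests in (1, 5, 20):
        naive = familywise_error_rate(alpha, n_tests)
        corrected = familywise_error_rate(
            bonferroni_level(alpha, n_tests), n_tests)
        print(f"{n_tests:3d} tests: uncorrected {naive:.3f}, "
              f"Bonferroni-corrected {corrected:.3f}")
```

With twenty uncorrected tests at 0.05, the chance of at least one spurious claim under these assumptions is already well above one half, which is the effect the excuse is about.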

Is this something people opening their data would need to worry about when they do their original analysis? It seems obvious to me that that's not the case and it would actually be impossible to do, in particular given that there is no way to predict what people will do in the future. But then there are many non-obvious results in statistics going against at least my gut feelings.

Mind you, this definitely does not apply to most astronomical research and data re-use I have seen. But the point did make me wonder whether we may actually need some more elaborate test theory for re-used open data. If you know about anything like that: please do let me know.

Followup (2024-09-10)

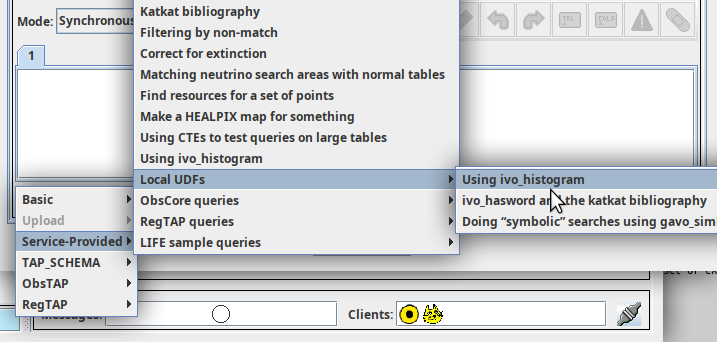

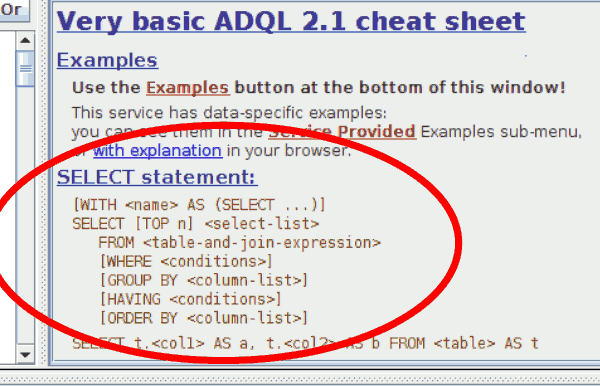

The first hint is out. It's “Try TOPCAT's TAP client to solve this puzzler; you may want to look for 2MASS XSC there.” Oh, and we noticed that the problem was stated rather awkwardly in the original puzzler, which is why we have issued an erratum. The online version is fixed; it now says “where we define obscure as covered by a circle of four J-magnitude half-light radii around an extended object”.

Followup (2024-09-10)

After our first splinter – with lively discussions on the concept and viability of the “science-ready data” we have always had in mind as the primary sort of thing you would discover in the VO – I have revealed the second hint: “TOPCAT's Examples button is always a good idea, in particular if you are not too proficient in ADQL. What you would need here is known as a Cone Selection.”

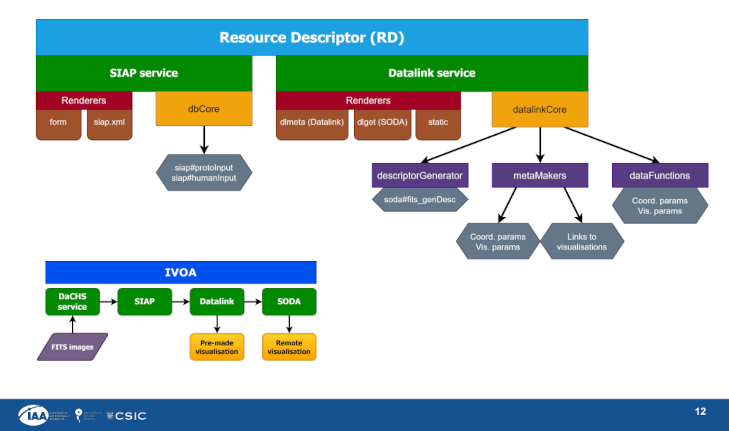

Oh, in case you are curious where the discussion on the science-ready data gyrated to: Well, the plan for supplying data usable without having to have reduction pipelines in place is a good one. However, there undoubtedly are cases in which transparent provenance and the ability to do one's own re-reductions enable important science. With datalink [I am linking to a 2015 poster on that written by me; don't read that spec just for fun], we have an important ingredient for that. But I give you that in particular the preservation of the software that makes up reduction pipelines is a hard problem. It may even be an impossible problem if “preservation” is supposed to encompass malleability and fixability.

Followup (2024-09-11)

I've given the last two hints today: “To find the column with the J half-light radius, it pays to sort the columns in the Columns tab in TOPCAT by name or, for experts using VizieR's version of the XSC, by UCD.” and “ADQL has aggregate functions, which let you avoid downloading a lot of data when all you need are summary properties. This may not matter with what little data you would transfer here, but still: use server-side SUM.”
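For the curious, the combination of a cone selection and a server-side aggregate from the hints could look roughly like the sketch below. It only builds the ADQL text, which you could then paste into TOPCAT's TAP client; the helper name and all table and column names in the usage example are hypothetical placeholders, not the ones you need for the puzzler.

```python
def cone_sum_query(table, column, ra_col, dec_col, ra, dec, radius_deg):
    """Return ADQL summing `column` over all rows of `table` that lie
    within radius_deg degrees of the position (ra, dec).

    The summing happens on the server, so only a single number is
    transferred instead of all matching rows.
    """
    return (
        f"SELECT SUM({column}) AS column_sum "
        f"FROM {table} "
        f"WHERE 1 = CONTAINS(POINT('ICRS', {ra_col}, {dec_col}), "
        f"CIRCLE('ICRS', {ra}, {dec}, {radius_deg}))"
    )


if __name__ == "__main__":
    # All names below are made-up placeholders for illustration.
    print(cone_sum_query(
        "some_schema.some_table", "some_column",
        "ra", "dec", 10.0, -5.0, 0.5))
```

Keeping the query builder separate from any network code is deliberate here: you can inspect the ADQL before sending it anywhere.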

Followup (2024-09-12)

I have published the (to me, physically surprising) puzzler solution to https://www.g-vo.org/puzzlerweb/puzzler2024-solution.pdf. In case it matters to you: The towel went to Marburg again. Congratulations to the winner!

Followup (2024-09-13)

On the way home I notice this might be a suitable place to say how I did the QR codes I was joking about above. Basis: The embedding documents are written in LaTeX, and I'm using make to build them. To include a QR code, I am writing something like:

\includegraphics[height=5cm]{vo-qr.png}

in the LaTeX source, and I am declaring a dependency on that file in the makefile:

fluggi.pdf: fluggi.tex vo-qr.png <and possibly more images>

Of course, this will error out because there is no file vo-qr.png at that point. The plan is to programmatically generate it from a file containing the URL (or whatever you want to put into the QR code), named, in this case, vo.url (that is, whatever is in front of -qr.png in the image name). In this case, this has:

https://doi.org/10.21938/avVAxDlGOiu0Byv7NOZCsQ

The automatic image generation then is effected by a pattern rule in the makefile:

%-qr.png: %.url
	python qrmake.py $<

And then all it takes is a short script qrmake.py, which is based on python3-qrcode:

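import sys

import qrcode

# The first argument is the .url file holding the QR code's payload
with open(sys.argv[1], "rb") as f:
    content = f.read().strip()

# border=0: no quiet zone; the surrounding document provides the margin
output_code = qrcode.QRCode(border=0)
output_code.add_data(content)

# vo.url -> vo-qr.png, matching the make pattern rule
dest_name = sys.argv[1].replace(".url", "")+"-qr.png"
output_code.make_image().save(dest_name)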
