Registry: A Janitor Speaks Out

I sometimes claim the reason I like working on the VO Registry is that I am a librarian at heart. Perhaps there is some truth to that, in that ugly metadata does make me unhappy – but beyond that, it also makes the Virtual Observatory look or even work a good deal worse than it should.

Given that, in this post I'm afraid I will sound more like a grumpy janitor than a wise librarian, but let me still attempt to contribute to better metadata by pointing out a few things to watch out for when writing a resource record. People consuming resource records (i.e., VO-using astronomers) are welcome here, too: when you encounter antipatterns mentioned here, a polite complaint to the service publisher is entirely a good thing.

Note that I am using real metadata found in the registry – in case you recognise some of your own records, do not feel reprimanded individually. Most of the problems I discuss here are really common at this point, and thus if I picked your metadata, that was mere bad luck. I actually picked some of my own occasionally (but duly fixed the problem then).

Missing Coverage

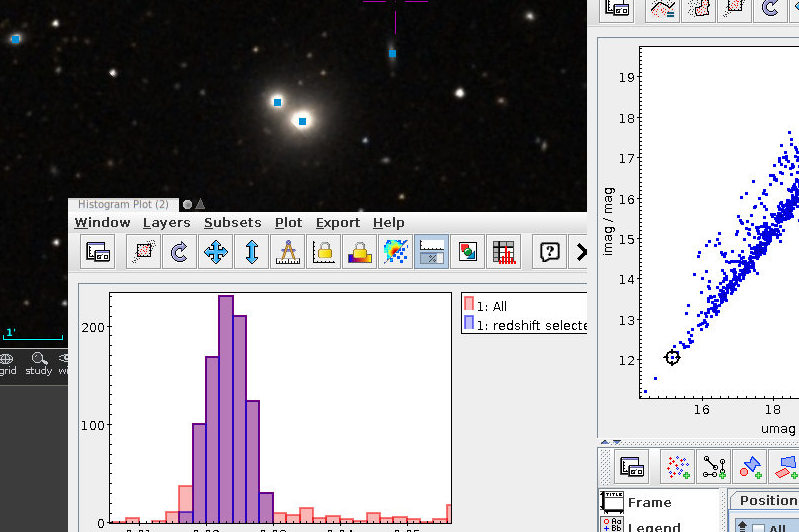

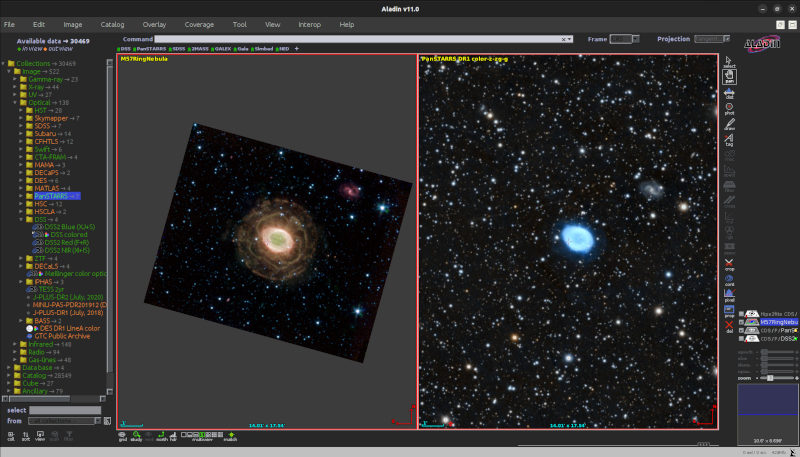

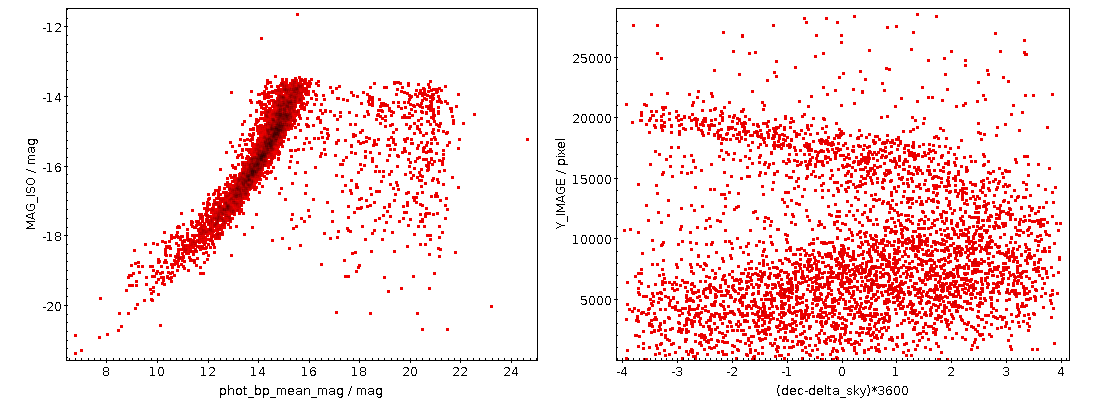

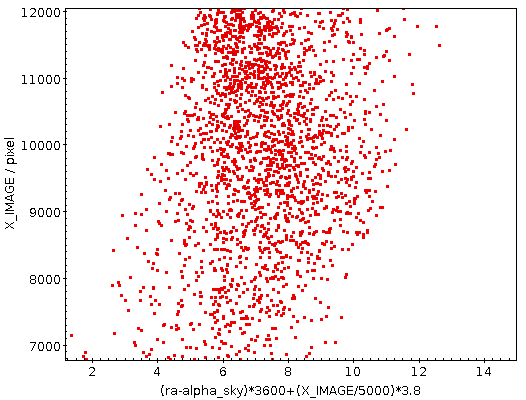

Since VODataService 1.2, you can say what part of the sky, spectrum, and time your resource covers. That is incredibly useful metadata in practice. Spatial coverage, for instance, is used in Aladin like this:

Green means: these services could have data for the patch of sky shown, orange means don't bother with these, and white means: No idea because the resource does not declare its coverage.

Similarly, it would be great if researchers or clients could reliably say:

SELECT * FROM rr.resource NATURAL JOIN rr.stc_spectral WHERE

1=ivo_interval_overlaps(spectral_start, spectral_end,

ivo_specconv(658, 'nm', 'J'), ivo_specconv(654, 'nm', 'J'))

to find resources having data covering the Hα line on the spectral axis. Currently, that's just 2064 resources, and given that Hα sits smack in the middle of the optical window that's an indication that far too few resources say where they are.
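In case the order of the two ivo_specconv calls in that query looks odd: the 'J' suggests the spectral limits are compared as photon energies in joules, and the longer wavelength is the lower energy. Here is a minimal sketch of that arithmetic in plain Python; the function names are made up for illustration and are not part of any VO library.

```python
# Physical constants (exact SI values).
PLANCK_H = 6.62607015e-34   # J s
LIGHT_C = 299792458.0       # m / s


def wavelength_nm_to_joule(wavelength_nm):
    """Return the photon energy in joules for a vacuum wavelength in nm."""
    return PLANCK_H * LIGHT_C / (wavelength_nm * 1e-9)


def intervals_overlap(lo1, hi1, lo2, hi2):
    """Return True if the closed intervals [lo1, hi1] and [lo2, hi2] intersect."""
    return lo1 <= hi2 and lo2 <= hi1


# The window around H-alpha from the query: 658 nm is the low-energy end.
halpha_window = (wavelength_nm_to_joule(658), wavelength_nm_to_joule(654))

# A hypothetical resource declaring coverage from 400 nm to 700 nm.
optical = (wavelength_nm_to_joule(700), wavelength_nm_to_joule(400))
print(intervals_overlap(*optical, *halpha_window))
```

A resource that declares no spectral coverage at all has nothing to compare, which is why it cannot turn up in such a query.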

So – add STC coverage to your data today. It's not hard with pymoc or pgsphere and chapter 3.2 of VODataService. DaCHS operators will probably get by just studying the corresponding section of the tutorial.

Broken Author Names

On the ADS, last time I had information on that, about 90% of the queries were by author. In the VO registry, by my unscientific estimate, less than 5% of queries constrain authors. Sure, people look for literature and data in different ways and for different purposes, but an important reason for the difference still is that we don't do a good job giving creator/name (which contains the equivalent of the author name).

The ideal format is to have last name first, then a comma, and then abbreviated initials or full first names, as in von der Heide, J. Many names in the VO are almost in this format but do not have a comma; the comma makes parsing these names a lot simpler, so please put it in. Of all the forms to write names in, that's most easily constrained without guessing how many first names are where. Remember, there are people out there with names like „Kirsten-Claude Selim de Vaucouleurs-van der Heide Lobos“ (or, for that matter, Uthamadhanapuram Venkatasubbaiyer Swaminatha Iyer), and a computer cannot efficiently decide where the last name starts in first name first order (and conversely, without the comma in last name first order, it has a hard time figuring out where the last name stops). Also, last name first almost always gives a more useful natural sort order.

Realistically, people will have to live with J. von der Heide, too, so author searches in the VO will have to look like LIKE '%von der Heide%' for some years to come, but let's at least try to improve. And whatever you do, don't do any of (in approximate order of severity):

- Dump in half an acknowledgement, e.g., under a cooperative agreement with the NSF on behalf of the Gemini partnership: the National Science Foundation (United States), or, about as bad: provided by S. Snowden from data by Dickey and Lockman – that's useless for author searches but invites lots of false positives

- Dump more than one name into one creator/name element, e.g., Zhuang Z.,Kirby E.N.,Leethochawalit N.,de los Reyes M.A.C. or Voges, W.; Aschenbach, B.; Boller, Th.; (and ~200 more characters) – that's really hard to search and essentially impossible to use for, e.g., author datagraphies

- Include affiliations (the VO can't properly deal with those yet), e.g., Zub M. (The PLANET Collaboration) or a combination of this and the previous: Zhu W. (The Spitzer team) Dominik M.

- Leave in citation debris, e.g., et al. MNRAS (in press), or, shockingly common: and Scheck M.; of course, entire citations (WALKER I. Astron. J. 106) are inappropriate, too – all of this will prevent the use of meaningful name constraints

- Give a bibcode: 2014ApJ...787...78M – this likely belongs into content/source

- Have empty author name elements (as, at this moment, 13 records)

- Cheat with effectively empty author names: <NOT GIVEN>, or "We forgot to give credit, please complain"

- Go all uppercase, e.g., ZINNECKER H. – standards-compliant ADQL string comparisons are case-sensitive, and case-folding would require special indexes. Perhaps case-insensitive author matches should be made easier in that van der Waals is probably the same person as Van der Waals, but that's not how it works right now. And I don't think that will change any time soon, because if I have learned one thing in my life it is that case insensitivity is almost always evil

- Have just a first name: walter or W.I. or W-J

- Combine author lists from different contributing papers: Wright et al.; Griffith, Wright, Burke, Ekers; Griffith, Wright – if you really need to do something like this, merge the two author lists – and then of course use one name per creator element

In principle, these considerations would apply to contributors, contacts and perhaps publishers, too, but since I don't think people should use these in discovery queries, their format does not matter too much: If they're human-readable, that's enough.
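Several of the antipatterns above are easy to catch mechanically before a record goes out. What follows is a rough sketch of such a check in Python; the rules, the placeholder list, and the function name are my own illustrative assumptions and not part of any Registry standard or tool.

```python
import re

# A bibcode is 19 characters long and starts with a four-digit year.
BIBCODE = re.compile(r"\d{4}[A-Za-z0-9.&]{14}[A-Z.:]")
# Placeholder strings that amount to an empty name (illustrative list).
PLACEHOLDERS = {"", "NOT GIVEN", "UNKNOWN", "N/A"}


def creator_name_problems(name):
    """Return a list of complaints about one creator/name value."""
    text = name.strip()
    if text.strip("<>").strip().upper() in PLACEHOLDERS:
        return ["empty or placeholder name"]
    if BIBCODE.fullmatch(text):
        return ["bibcode instead of a name"]
    problems = []
    if ";" in text or text.count(",") > 1:
        problems.append("several names in one element")
    elif "," not in text:
        problems.append("no comma after the last name")
    if "(" in text:
        problems.append("parenthesised material, perhaps an affiliation")
    if re.search(r"\bet al\b|\bin press\b", text):
        problems.append("citation debris")
    letters = [c for c in text if c.isalpha()]
    if len(letters) > 4 and all(c.isupper() for c in letters):
        problems.append("all uppercase")
    return problems


for candidate in ["von der Heide, J.", "ZINNECKER H.", "<NOT GIVEN>"]:
    print(candidate, "->", creator_name_problems(candidate))
```

This is a heuristic and will produce false alarms (a name with a suffix such as "Smith, J., Jr." has two commas, for instance), so treat its output as a hint on what to look at rather than a verdict.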

Fragile Contact Info

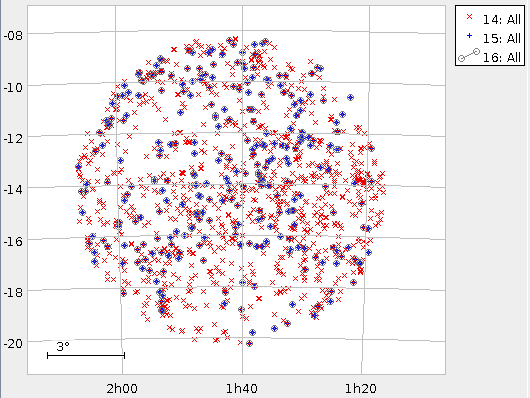

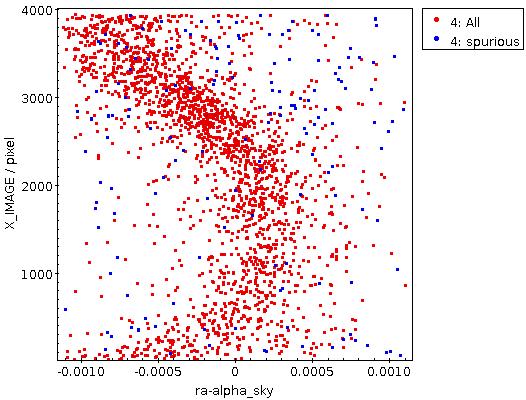

Quite regularly I need to ask people to fix something in their publishing registries, and then it's really useful to have reliable contact information. That's also nice for VO users; pyVO, for instance, has the get_contact method on registry records, and in WIRR, you can pop up contact info on all records:

For that to work, personal addresses in the contact information are really dangerous – it is my experience that these break significantly more often than institutional addresses. So, please avoid things like (I'm making all of these up because there may still be folks around harvesting mail addresses to send spam):

- a.b.miller-parachtnix@gmail.com (well: avoid using gmail.com unconditionally)

- friederike.student@ari.uni-heidelberg.de

Rather, create an alias that you can hand on and that perhaps is even a bit speaking. This could be:

- vo-help@great-telescope.org

- gavo@ari.uni-heidelberg.de

- uni-hd-vo@posteo.de (in case your own institution absolutely loathes the idea of addresses not bound to persons)

Non-machine-readable Subjects

VOResource 1.1 said that subjects are to be taken “from the UAT” (that's the Unified Astronomy Thesaurus), but failed to say what exactly that means. Since last July, this is properly defined: Use fragment identifiers into http://www.ivoa.net/rdf/uat, that is, something like abell-clusters.

Having all subject keywords in a predictable format, with useful metadata, and part of a proper hierarchy enables all kinds of cool stuff, and hence it would be great if we could stomp out the following sorts of mispractice in the VO:

- Multiple things in one subject element: ATLAS DR1, SIAP, Images – have one term per subject element

- Undefined NULL values: NOT PROVIDED, ??? – if you really cannot find a pertinent term, use astronomical-research (or one of the other top-level terms). If nowhere else, that at least helps when your record moves to interdisciplinary search engines

- Random free text: optical lines equivalent width catalog – that's multiple terms rolled into one, and the machine will not know what it means

- Project or instrument names: 6dF Data Release 3 Spectra, COROT N2 – there's the instrument metadata for some uses of that. For the rest, see above on having projects in creator/name.

- Protocol names: TAP – that's what capabilities are for

- Service titles: CADC image/cube HiPS service – that's what the title element is for

- Non-UAT keyword schemes: Galaxy:general – let's not force VO components to learn about multiple keyword systems. If you are missing something from the UAT, tell them about it
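Most of these cases can be spotted from the shape of the string alone. The sketch below assumes that term identifiers in the IVOA rendering of the UAT are lowercase words joined by hyphens, as in abell-clusters; that assumption and the function name are mine.

```python
import re

# Assumed shape of a UAT term identifier: lowercase alphanumeric words
# joined by single hyphens, e.g. "abell-clusters".
TERM_SHAPE = re.compile(r"[a-z0-9]+(-[a-z0-9]+)*")


def looks_like_uat_term(subject):
    """Return True if subject has the shape of a UAT term identifier."""
    return TERM_SHAPE.fullmatch(subject) is not None


for subject in ["abell-clusters", "ATLAS DR1, SIAP, Images", "???",
                "Galaxy:general"]:
    print(repr(subject), looks_like_uat_term(subject))
```

Passing this test only means a string could be a term. Whether it actually is one still has to be checked against the vocabulary published at http://www.ivoa.net/rdf/uat.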

Unfulfilling Resource Descriptions

Descriptions of VO resources serve a dual purpose: They should give researchers a quick idea of what to expect and not expect of a resource, and they should mention all the important buzzwords for the benefit of full-text searches. Hence, if you only have two words as in:

Survey (LoLSS).

or have something like a title:

Convolution of normalized synthetic stellar spectra.

or use somewhat uncommon abbreviations and technical details that probably will not help much during data discovery:

USET Group form

(what group? Does „form“ really mean „web browser-facing“? If so, that's again better expressed through the capabilities), you should work a bit on your description.

It is usually helpful to start the description with „this service is…“ or something similar. While it's marginally ok to mention terms and conditions like:

When referencing results from this online catalog, please cite <a href="https://iopscience.iop.org/article/10.384…

further down in the description (the proper place for this kind of thing is the rights element, though), don't discuss stuff like this before you have told people what is in the “online catalog” in the first place. Also: registry records are like e-mail in that you shouldn't use HTML anywhere in registry metadata. If you have to include URLs in text for human consumption, just put them in as text.

Talking about markup: You cannot rely on any of that in descriptions. Even basic ASCII art (or, well, tables) will always come out bad:

Only the data from the first catalog that was matched is reported here according to the following priority list. This means for example, if a star was matched with Hipparcos, that information was used while possible other catalog data are not listed here. -------------------------------------------------------- # stars flg catalog -------------------------------------------------------- 53500 0 no catalog match 55549 1 Hipparcos 254 2 Yale Parallax Catalog 1041 3 Finch and Zacharias 2016 (UPM NNNN-NNNN) 1431 4 MEarth parallaxes 402 5 SIMBAD Database (w/parallax) -------------------------------------------------------- 112177 total number stars in catalog -------------------------------------------------------- Not all parallaxes from the...

(of course, that in this case the newlines and longer sequences of blanks have been normalised to single blanks already in the original resource record makes it particularly certain that the table will come out wrong).

And where in titles abbreviations are usually a good thing, in particular when you can expect your target audience to look for the abbreviation rather than spelled-out names in discovery queries, in descriptions you have space, and hence you normally should explain MCQA as „Monte Carlo Quality Assessment“ in something like the following:

Herschel sources in Planck fields measured at 350 µm MCQA

Remember: The people who read your descriptions may come from the future (as in: 25 years from now) or at least may be unfamiliar with your project's jargon.

Lame Relationships

There are an incredible 136958 relationships in the current VO that have related-to as their relationship type. This is deplorable because the relevant vocabulary, https://www.ivoa.net/rdf/voresource/relationship_type, marks it as deprecated, and that's for a good reason: Just stating “some relationship“ between two resources is rarely useful. Decide what the relationship is and then pick a proper term (or, if that does not exist, prepare a VEP).

Missing Tablesets

Tablesets are a VODataService feature giving metadata on the return table (or, in the case of the flexible TAP services, the queried tables). They are really useful if you look for services returning some sort of physics – and if you are running TAP services, they will one day let me shut down the GloTS service that replicates a good deal of registry functionality for no good reason at all.

So, if you have a catalog service and your registry record ends somewhat like this:

</capability> </ri:Resource>

it is almost certainly missing a tableset (which would normally go after the capabilities; you are probably also missing the sky coverage, though, because that would sit there, too).

Writing basic tablesets is not hard. In fact, if you are running a TAP service, you have a working tableset on your service's tables endpoint. But even without VOSI tables, making a tableset from the VOTable you return is straightforward – with a few encouraging words, I could be talked into writing a few lines of Python that do that.
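To give an idea of how little is involved, here is a minimal sketch that turns the FIELD declarations of the first TABLE in a VOTable into a tableset fragment, using only the Python standard library. The function names, the "default" schema name, and the fallback table name are assumptions made for illustration. The vs prefix in the xsi:type value is assumed to be bound to the VODataService namespace by the enclosing resource record.

```python
import xml.etree.ElementTree as ET

XSI = "http://www.w3.org/2001/XMLSchema-instance"
ET.register_namespace("xsi", XSI)


def local_name(element):
    """Strip the XML namespace so any VOTable 1.x version is accepted."""
    return element.tag.rsplit("}", 1)[-1]


def child_text(element, name):
    """Return the whitespace-normalised text of a direct child, or ''."""
    for child in element:
        if local_name(child) == name:
            return " ".join((child.text or "").split())
    return ""


def tableset_from_votable(votable_xml, table_name="main"):
    """Build a tableset fragment from the first TABLE of a VOTable."""
    root = ET.fromstring(votable_xml)
    source = next(el for el in root.iter() if local_name(el) == "TABLE")

    tableset = ET.Element("tableset")
    schema = ET.SubElement(tableset, "schema")
    ET.SubElement(schema, "name").text = "default"
    table = ET.SubElement(schema, "table")
    ET.SubElement(table, "name").text = source.get("name", table_name)
    if child_text(source, "DESCRIPTION"):
        ET.SubElement(table, "description").text = child_text(
            source, "DESCRIPTION")

    for field in source:
        if local_name(field) != "FIELD":
            continue
        column = ET.SubElement(table, "column")
        ET.SubElement(column, "name").text = field.get("name")
        if child_text(field, "DESCRIPTION"):
            ET.SubElement(column, "description").text = child_text(
                field, "DESCRIPTION")
        # unit, ucd, and utype are simply copied over when present.
        for attr in ("unit", "ucd", "utype"):
            if field.get(attr):
                ET.SubElement(column, attr).text = field.get(attr)
        datatype = ET.SubElement(column, "dataType")
        datatype.set("{%s}type" % XSI, "vs:VOTableType")
        if field.get("arraysize"):
            datatype.set("arraysize", field.get("arraysize"))
        datatype.text = field.get("datatype")
    return ET.tostring(tableset, encoding="unicode")
```

Calling tableset_from_votable on the text of a VOTable your service returns gives a string to paste after the capabilities of the record. It will only be as good as the FIELD metadata in that VOTable, which is what the rest of this post is about.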

I will readily admit that writing good tablesets is more involved, but what is hard about it you should be doing anyway, because it also will improve the VOTables that you write, and hence the usability of your data all around. So, until the end of this post let me look at some common warts of the column metadata in today's VO.

Deficient Column Descriptions

Column descriptions like ?, ??, or even ??? are surprisingly common. Please don't do that. If you really have no idea what your upstream has put into a column, admit that, apologise and try to make your upstream explain.

And while RA somewhat works among astronomers, a word or two on the reference system (“ICRS”) and an informal provenance (“from PSF fits”) would certainly be much appreciated by your users and might even come in handy in discovery.

Or consider “Age” – this could immediately be improved by revealing just what has aged here and, again, some hint on how the age was estimated (e.g., “obtained from ivo://foo.bar/res” versus “by isochrone fitting”).

But don't overdo it, either: Do not include entire footnotes in descriptions, because that will lead to many false positives in full text searches (not to mention slow down the Registry as a whole if this became common practice). DaCHS operators: you can have footnotes in your RD by using note meta items; cf. Typed Meta Elements in the DaCHS reference.

Near the upper limit of what is appropriate in a column description is perhaps something like this:

The 2.5 percentile of the Log total SFR PDF. This is derived by combining emission line measurements from within the fibre where possible and aperture corrections are done by fitting models ala Gallazzi et al (2005), Salim et al (2007) to the photometry outside the fibre. For those objects where the emission lines within the fibre do not provide an estimate of the SFR, model fits were made to the integrated photometry.

– but at the same time it illustrates how you can provide a lot of information that helps casual users.

The position angles I will turn to in a second give another nice example of why human-readable descriptions are so important: There is no reliable convention of the direction and the baseline of these, so stating something like „north over east“ in a description will avoid a lot of head-scratching.

Column UCDs: Missing, Outdated, or Useless

A very plausible discovery scenario involves UCDs: „give me resources with (some photometry | redshifts | kinematics | dynamics | positions on earth)“. Hence, make sure your columns' metadata has predictable and halfway correct UCDs.

Sure, that's not always straightforward (note, by the way, that there is a reasonably simple process to suggest new UCDs), but there's no excuse for there being 117 columns called pa without any UCD, where pos.posAng will almost certainly fit all of them (though, who knows: 30 of these in addition don't even have a description).

To make sure the UCDs you assign exist, run them through astropy at least once. Do not ignore complaints by astropy; it is actually preferable to have no UCD rather than “??” (which currently a whopping 30342 columns sport, in addition to which we have 41 times “???“ and 70 times “????“[1]). Also, resist the temptation to freely invent things, such as the “mjd” UCD I'm seeing on 13 columns. In this particular case, by the way, I give you that saying “this column contains MJDs“ has been a pain in VOTables for a long time, but since version 1.4, TIMESYS lets you do that in a reasonable way.

Oh, let me qualify the “freely invent“ in the last paragraph: It could be[2] that MJD has actually been part of the original UCDs you may still know from cone search (“POS_EQ_RA”); that people have not updated their metadata from these ancient days is also the reason I'm still seeing 13827 columns with an (invalid) UCD of “error“ in column metadata (and 84 with pos_eq_dec).

Unrelatedly (though with an undisputable entertainment value): the longest UCD in the current VO is meta.code;phot.flux.density;arith.ratio;em.ir.15-30um;em.radio.750-1500mhz; unless I and astropy are missing something, it's even syntactically correct.

Bad Units

While I do not see many discovery scenarios that would make good use of units, do not forget to update your units to VOUnits when you touch up your tablesets. This will let software like astropy do the unit calculus for its users, which is a win overall. It cannot do that if you ignore VOUnits and write, say, ABmag/arcsec2 – the AB part you will have to communicate in the description for now, and exponentiation is ** in VOUnits.

Recent versions of the stilts validators (votlint, taplint) will complain about bad units. And you can use stilts interactively to figure out whether you got it right:

$ stilts calc 'vounitStatus("ABmag/arcsec2")'

BAD_SYNTAX

$ stilts calc 'vounitStatus("mag/arcsec**2")'

OK

[In a previous version of this post, I have given a piece of astropy to do unit checking; it turns out that astropy by default is rather forgiving, and you want stilts on your box anyway; why not use it for unit validation? If your stilts says something about “bad expression“ with the command lines above, it's an indication that you should update it.]

And with this somewhat non-registry topic: Go forth and polish your resource records. Or, as a consumer of such metadata, ask the publishers of bad resource metadata to fix it.

[1] Remarkably, there are no ????? or even longer sequences of question marks, and even more remarkably, nobody has put in a lonely question mark. If someone versed in cognitive psychology has a plausible interpretation for that fact: can you share it with me?

[2] Since the original UCDs predate my VO involvement and, for all I know, never were properly standardised, I frankly can't say.
