2019-05-12

Markus Demleitner

About every six months, the people making the standards for the Virtual Observatory meet to sort out the next things we need to tackle, to show off what we've done, and to meet each other in person, which sometimes is what it takes to take some excessive heat out of a debate or two.

We've talked about Interops before. And now it's time for this (northern) spring's Interop, which is taking place in Paris (Program).

This time I thought I'd see if there's any chance I can copy the pattern I'm enjoying at Skyweek now and then: A live blog, where I'll extend the post as I go. Whether that plan can fly remains to be seen, as I'll give seven talks by Friday, and there's a plethora of side meetings and other things requiring my attention.

Anyway, the first agenda item is a meeting of the TCG, the Technical Coordination Group, which is made up of the chairs and vice-chairs of the IVOA's working groups (I'm in there as the vice chair of the semantics WG). We'll check on the progress of the standards under review, sanction (or perhaps defer) errata, and look at issues of general VO interest.

Update (2019-05-12, 10:50): Oh dang, my VOResource 1.1 Erratum 1 hasn't quite made it. You see, it's about authentication, i.e., restricting service access, which, in a federated, interoperating system, is trickier than you would think, and quite a few discussions on that will happen during this Interop. So, the TCG has just decided to only consider it passed if nothing happens this week that would kill it. To give you an idea of other things we've talked about: Obscore 1.1 Erratum 1 and SODA 1.0 Erratum 1 both try to fix problems with UCD annotation (i.e., a rough idea of what a value is), which is not directly related to the standards themselves but is intended to help when service results are consumed outside of the standard context, and RegTAP 1.0 Erratum 1 fixes an example in the standard regulating registry discovery that didn't properly take into account my old nemesis, the case-insensitivity of IVOA identifiers. So, yay!, at least one of my errata made the TCG review.
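
The case-insensitivity trap behind the RegTAP erratum can be illustrated with a minimal Python sketch. It assumes only that IVOA identifiers are to be compared case-insensitively, so a client should normalise both sides before matching. The helper names and example identifiers are made up for illustration and are not part of any IVOA library.

```python
def normalize_ivoid(ivoid: str) -> str:
    """Return a canonical form of an IVOA identifier for comparisons.

    Assumption of this sketch: IVOIDs compare case-insensitively, so
    lower-casing both sides is enough to compare them.
    """
    return ivoid.lower()


def same_resource(a: str, b: str) -> bool:
    """True if two IVOIDs denote the same resource (case-insensitive)."""
    return normalize_ivoid(a) == normalize_ivoid(b)


if __name__ == "__main__":
    # A naive string comparison misses this match...
    print("ivo://org.gavo.dc/tap" == "ivo://org.gavo.DC/TAP")
    # ...while the normalised comparison finds it.
    print(same_resource("ivo://org.gavo.dc/tap", "ivo://org.gavo.DC/TAP"))
```

The same idea carries over to ADQL. Assuming, as RegTAP does, that identifiers are stored lower-cased, any literal compared against an ivoid column should be written in lower case as well.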

Update (2019-05-12, 12:15): Yay! After some years of back and forth, the TCG has finally endorsed my Discovering Data Collections note. This is another example of the kind of text you don't really notice. It's supposed to let you, for instance, type a table name into TOPCAT and then figure out which TAP service to query it on. You say: I can already do that! I say: Yeah, but only because I'm running a non-standard service, which I'd like to shut down at some point.
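
To make the table-name lookup concrete, here is a sketch of a query one might send to a RegTAP service. It is a hypothetical illustration, not the mechanism defined in the note. It assumes the RegTAP tables rr.res_table, rr.capability and rr.interface, the RegTAP user-defined function ivo_nocasematch, and that data collection records point to their TAP services through capabilities with the standard id ivo://ivoa.net/std/tap#aux.

```python
def tap_services_for_table(table_name: str) -> str:
    """Build an ADQL query against a RegTAP service listing access URLs of
    TAP services (or auxiliary TAP capabilities) for a given table name.

    Assumptions of this sketch: the RegTAP tables rr.res_table,
    rr.capability and rr.interface; the RegTAP function ivo_nocasematch;
    and lower-cased standard ids for TAP and for auxiliary TAP (#aux).
    """
    # Double single quotes so the name is safe inside an ADQL string literal.
    literal = table_name.replace("'", "''")
    return (
        "SELECT DISTINCT i.access_url, t.ivoid\n"
        "FROM rr.res_table AS t\n"
        "JOIN rr.capability AS c ON t.ivoid = c.ivoid\n"
        "JOIN rr.interface AS i\n"
        "  ON c.ivoid = i.ivoid AND c.cap_index = i.cap_index\n"
        "WHERE c.standard_id IN ('ivo://ivoa.net/std/tap',\n"
        "                        'ivo://ivoa.net/std/tap#aux')\n"
        f"  AND 1 = ivo_nocasematch(t.table_name, '{literal}')"
    )


if __name__ == "__main__":
    print(tap_services_for_table("gaia.dr2light"))
```

The resulting string could be run against a RegTAP endpoint such as the TAP service at http://dc.g-vo.org/tap, for instance with pyVO's TAPService.search. The table name in the example is only a placeholder.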

Update (2019-05-12, 15:55): The TCG meeting slowly draws to an end. This second half was, in particular, concerned with reports from Working and Interest Groups; this is, essentially, an interactive version of the roadmaps, where the various chairs say what they'd like to do in the six months following an Interop. The one from after College Park (VO insiders live by Interops, named after the towns they're held in) you can read at 2018 B Roadmap in the IVOA Wiki – but really, as of next Friday, you'd rather look at what's going to be cooked up here (which will be at 2019 A Roadmap).

Update (2019-05-12, 16:30): It's now Exec, i.e., the governing body of the VO, consisting of the principal investigators (or bosses) of the national VO projects (I'm just sitting in for my boss, really). This body has, for instance, the final say on what gets to be a standard and what doesn't. This is, of course, a bit more formal than the hands-on debates going on in the TCG, so I get to look around a bit in the meeting room. And what a meeting room they have here at Paris observatory. Behind me there's a copy of Louis XIV's most famous portrait (and for a reason: Louis XIV had the main part of the building we're in built), along the walls around me are the portraits of the former directors of Paris observatory (among them names all mathematicians or astronomers know: Laplace, Delaunay, Lalande, the Cassinis, and so on), and above me, in the meeting room's dome, there's an allegorical image of a Venus transit that I can't link here lest schools block this important outreach site. What a pity we'll have to move into a tent when everyone else comes in tomorrow...

Update (2019-05-13, 9:11): The logistics speech is being given by Baptiste Cecconi, who's just given the carbon footprint of this meeting – 155 tons of CO2 for travel alone, or 1.2 tons per person. That, as he points out, is about what would be sustainable per year. Well, they're trying to make amends as far as possible. We'll have vegetarian-only food today (good for me), and locally grown food as far as possible. Also, the conference freebie is a reusable cup so people won't produce endless amounts of waste plastic cups. I have to say I'm impressed.

Update (2019-05-13, 9:43): One important function of these meetings is that when software authors and users sit together, it's much easier to fix things. And, first success for me this time around: The LAMOST services at the data center of the Czech Academy of Sciences do fast positional searches now; you'll find them by looking for LAMOST in TOPCAT's SSAP window, in Aladin 10, in Splat, or really wherever clients let you do discovery of spectral services in the VO.
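
At the protocol level, such a positional search is just an SSAP queryData request with POS and SIZE parameters. The sketch below builds such a URL with the Python standard library. The base URL is a placeholder rather than the actual LAMOST service. The sketch assumes POS is given as "ra,dec" in ICRS degrees and SIZE as the diameter of the search region in degrees.

```python
from urllib.parse import urlencode


def ssap_positional_query(base_url: str, ra: float, dec: float, size: float) -> str:
    """Return an SSAP queryData URL for a search around (ra, dec).

    ra, dec and size are in degrees; size is taken as the diameter of the
    search region. base_url is a service's access URL as found in the
    Registry (the one in the example below is a placeholder).
    """
    params = {
        "REQUEST": "queryData",
        "POS": f"{ra},{dec}",
        "SIZE": str(size),
    }
    # Append to an existing query string if the access URL already has one.
    separator = "&" if "?" in base_url else "?"
    return base_url + separator + urlencode(params)


if __name__ == "__main__":
    print(ssap_positional_query("http://example.org/ssap", 10.5, -3.25, 0.1))
```

Clients like TOPCAT or Splat do this for you, taking the access URLs from the Registry.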

Update (2019-05-13, 10:59): Next up: “Charge to the Working Groups”. That's when the various working group chairs give lightning talks on what's going to happen in their sessions and try to pull as many people as they can. Meanwhile, in the coffee break, I've had the next little success: With the people involved, we've worked out a good way to fix a Registry problem briefly described by “two publishing registries claim the same authority” (it's always nice to pretend I'm in Star Trek) – indeed, we'll only need a single deletion at a single point. Given the potential fallout of such a problem, that's very satisfying.
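
The problem of two publishing registries claiming the same authority is easy to detect once you have each registry's list of managed authorities, as declared in their registry records. The minimal sketch below uses made-up registry and authority identifiers. It only detects the conflict; the actual fix agreed here (a single deletion) is an administrative matter not modelled in the code.

```python
from collections import defaultdict


def conflicting_authorities(claims: dict[str, list[str]]) -> dict[str, list[str]]:
    """Return authorities claimed by more than one publishing registry.

    claims maps a registry's IVOID to the authority IDs it says it manages.
    Authority IDs are compared case-insensitively, like other IVOA IDs.
    The result maps each contested authority to the sorted claimants.
    """
    claimants = defaultdict(set)
    for registry, authorities in claims.items():
        for authority in authorities:
            claimants[authority.lower()].add(registry)
    return {auth: sorted(regs) for auth, regs in claimants.items() if len(regs) > 1}


if __name__ == "__main__":
    # Hypothetical example data.
    print(conflicting_authorities({
        "ivo://example.org/registry-a": ["example.org", "shared.auth"],
        "ivo://example.net/registry-b": ["Shared.Auth", "example.net"],
    }))
```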

Update (2019-05-13, 14:07): While the IG/WG chairs presented their plans, the Ghost of Le Verrier (or was it just the wind?) occasionally haunted the tent, which gave off dreadful noises. And after the session, I quickly ported the build infrastructure for the future EPN-TAP specification (SVN for nerds; previously in this blog for the rest of you) to Python 3. Le Verrier was quiet during that time, so I'm sure the guy who led the way to the discovery of Neptune approved.

Update (2019-05-13, 14:29): Mark SubbaRao from Chicago's Adler Planetarium is giving a plenary talk (in other places, this might be called a “keynote”) on Planetaria and the VO. And he makes the point that there are 150 million people visiting a planetarium each year, which, he claims, is a kind of outreach opportunity that no other science has. I'd not bet on that last statement given all the natural history museums, exploratoria, maker faires and the like, but still: That the existence of planetaria says something about the relationship of the public with astronomy is an insight I just had.

Update (2019-05-13, 15:07): So, you think you just sit back and enjoy a colourful talk, and then suddenly there's work in there. Specifically, there's a standard called AVM designed to annotate astronomical images to show them in the right place on a planetarium dome (ok, FITS WCS can do that as well) and furnish them with other metadata useful in outreach and education. As a Registry and Semantics enthusiast, I immediately clicked on the AVM link at the foot of http://www.data2dome.org and was greeted by something pretty close to a standard IVOA document header. Except it declares itself an “IVOA draft”; no such document category really exists. Even if it did, after around 10 years (there are conflicting date specs in the document) a document shouldn't be a “draft” any more. If it's survived that long and is still used, it deserves to be some sort of proper document, I think. So, I took the liberty of cold-contacting one of the authors. Let's see where that goes.

Update (2019-05-13, 16:29): We've just learned about the standardisation process at IPDA (that's a bit like the IVOA, just for planetary data), and interestingly, people vote on their standards there – in contrast to the IVOA practice of requiring consensus. Our argument has always been that a standard only makes sense if all interested parties adopt it and thus have to at least not veto it. I wonder if these different approaches have to do with the different demographics: within the IPDA, there are far fewer players (space agencies, really) with much clearer imbalances (e.g., between NASA and the space agency of the UAE). Hm. I couldn't say how these would impact our arguments for requiring consensus...

Update (2019-05-13, 17:11): Isn't that nice? In the session of the solar system interest group, Eleonora Alei is just reporting on her merged catalog of exoplanets – which is nice in itself, but what's pleasant for me is to learn she was able to make it because of the skills she learned at the ASTERICS school in Strasbourg last November. You see, I was one of the tutors there!

Update (2019-05-14, 8:50): Next up is the first Registry session, whose highlight is a talk on how to get the information on all our fine VO services into B2Find, a Registry-like thing for the European Open Science Cloud. I'll also present my findings on what we (as the VO) have gotten wrong when we used “capabilities” to describe things, and also progress on VODataService 1.2; this latter thing is, as far as users are concerned, mainly about finally enabling registry searches by space, time, and spectral coverage.
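
For time and spectral coverage, such a registry search boils down to an interval overlap test. The sketch below assumes each resource declares a single closed interval (real coverage declarations may list more than one). The class and function names are hypothetical, and the resource IDs and values in the example are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    """A closed interval, e.g. a time range in MJD or a spectral range in metres."""
    lower: float
    upper: float


def overlaps(coverage: Interval, query: Interval) -> bool:
    """True if a resource's coverage interval intersects the query interval.

    Two closed intervals intersect exactly when each one starts no later
    than the other ends.
    """
    return coverage.lower <= query.upper and query.lower <= coverage.upper


def matching_resources(coverages: dict[str, Interval], query: Interval) -> list[str]:
    """Return the (sorted) IDs of all resources whose coverage overlaps the query."""
    return sorted(rid for rid, cov in coverages.items() if overlaps(cov, query))


if __name__ == "__main__":
    coverages = {  # hypothetical resources with time coverage in MJD
        "ivo://x/a": Interval(50000, 51000),
        "ivo://x/b": Interval(52000, 53000),
    }
    print(matching_resources(coverages, Interval(50500, 52100)))
```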

Update (2019-05-14, 14:11): So, I did run into overtime a bit with my talks, which mostly is a good sign at Interops, because it indicates there's discussion, which in turn indicates interest in the topic at hand. The rest of the morning I spent trying to work out how we can map the VO Registry (i.e., the set of metadata records about our services) into b2find in a way that's actually useful. I guess we – that's Claudia from b2find, Theresa as Registry chair, and me – made good progress on this, perhaps not least because of the atmosphere of the meeting: In the sun in the beautiful garden of Paris observatory. And now: Data Models I.

Update (2019-05-14, 14:51): Whoops – Steve just mentioned in his talk on the Planetary Data System that there's ISO 14721, a reference model for an Open Archival Information System. Since I run such an archive, I'm a bit embarrassed to admit I've never heard of that standard. The question, of course, is whether this has the same relationship to actually running an archive as ISO 9001 has to “quality” (Scott Adams once famously said something to the effect of: if you've not worked with ISO 9001, you probably don't know what it is. If you have worked with ISO 9001, you certainly don't know what it is).

Update (2019-05-15, 9:30): I've already given my first talk today: TIMESYS and TOPOCENTER, on a quick way to deal with the problem of adjusting for light travel times when people have not reduced the times they give to one of the standard reference positions. There are more things close to my heart in this session: MOCs in Space and Time, which might become relevant for the Registry [up-update: and, wow, for quick searches against planetary or asteroid orbits. Gasp]; you see, MOCs are rather compact representations of (so far only spatial) coverages, and the space MOCs are already in use for the Registry in the rr.stc_spatial table on the TAP service at http://dc.g-vo.org/tap. The temporal part of STC-based discovery is just intervals at this point, which probably is good enough – but who knows? And I'm also curious about Dave's thoughts on the registration of VOEvents, which takes up something I reviewed ages ago and that went dormant then – which was somewhat of a pity, because to this day there's no way to find active VOEvent streams.
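
The core of the light-travel-time problem is the geometric (Roemer) term: a signal reaches an observer displaced by r from the reference position a time r·n/c earlier than it reaches the reference position, where n is the unit vector towards the source. The sketch below only computes that term. It is not what the TIMESYS/TOPOCENTER proposal prescribes, ignores relativistic corrections, and assumes you already have the observer position relative to the reference position (e.g. the solar system barycentre) from an ephemeris.

```python
import math

AU_M = 149_597_870_700.0   # astronomical unit in metres (IAU 2012 definition)
C_M_PER_S = 299_792_458.0  # speed of light in m/s


def geometric_delay_s(observer_au, source_direction):
    """Geometric light-travel-time correction in seconds.

    observer_au: observer position relative to the reference position,
        as an (x, y, z) tuple in AU.
    source_direction: vector pointing towards the source (need not be
        normalised).

    Returns r.n/c, the amount to add to the observed time to get the time
    the signal would have reached the reference position. Only the
    geometric term is included; relativistic terms are ignored.
    """
    norm = math.sqrt(sum(c * c for c in source_direction))
    projection_au = sum(r * n for r, n in zip(observer_au, source_direction)) / norm
    return projection_au * AU_M / C_M_PER_S


if __name__ == "__main__":
    # Observer 1 AU from the reference point, straight towards the source:
    # roughly the maximum correction for an Earth-bound observer.
    print(geometric_delay_s((1.0, 0.0, 0.0), (1.0, 0.0, 0.0)))
```

The maximum of about 500 seconds for Earth-based observers shows why unreduced times matter for anything time-critical.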

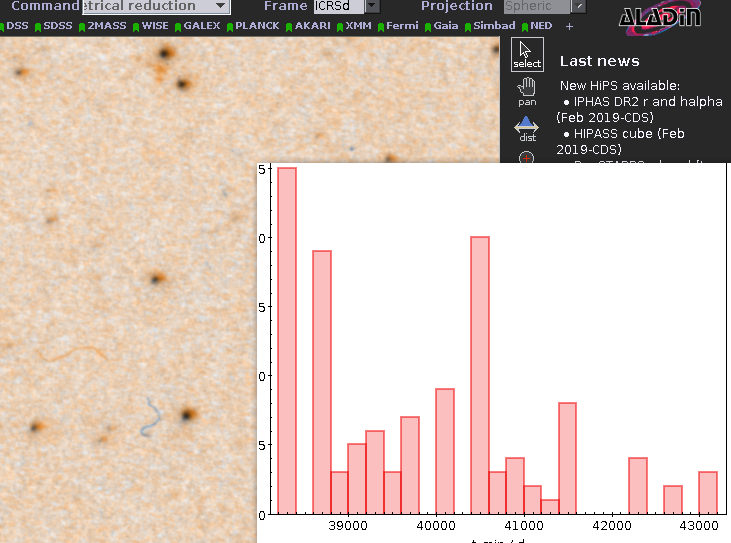

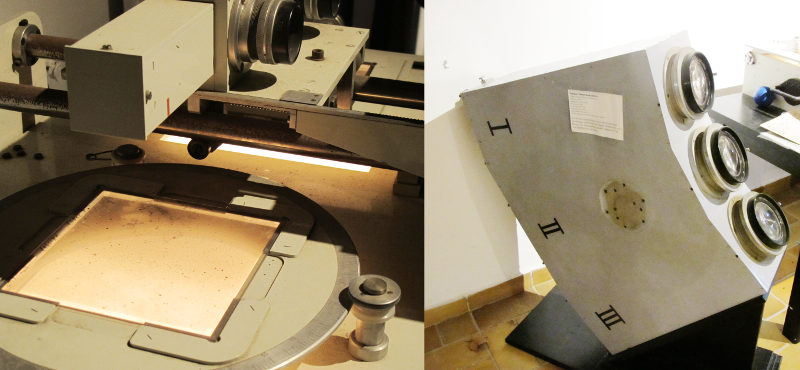
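
MOCs are built from HEALPix cells in the NESTED scheme, and that hierarchy is what makes overlap tests against them cheap. In the NESTED scheme, a cell at a given order contains exactly those deeper cells whose index, shifted right by two bits per order of difference, equals its own index. The minimal sketch below models a MOC as a plain list of (order, index) pairs instead of using a real MOC library or the serialisation defined by the IVOA MOC standard. Its brute-force pairwise check is meant for illustration, not efficiency.

```python
def cells_overlap(a: tuple[int, int], b: tuple[int, int]) -> bool:
    """True if two HEALPix NESTED cells, given as (order, index), overlap.

    Nested cells are either disjoint or one contains the other, so they
    overlap iff the deeper cell's index, shifted right by 2 bits per order
    of difference, equals the coarser cell's index.
    """
    (order_a, ipix_a), (order_b, ipix_b) = a, b
    if order_a > order_b:
        # Make a the coarser (or equal-order) cell.
        (order_a, ipix_a), (order_b, ipix_b) = (order_b, ipix_b), (order_a, ipix_a)
    return (ipix_b >> (2 * (order_b - order_a))) == ipix_a


def mocs_overlap(moc_a, moc_b) -> bool:
    """True if any cell of moc_a overlaps any cell of moc_b."""
    cells_b = list(moc_b)  # allow moc_b to be any iterable, consumed once
    return any(cells_overlap(ca, cb) for ca in moc_a for cb in cells_b)


if __name__ == "__main__":
    # Order-1 cell 13 lies inside order-0 cell 3 (13 >> 2 == 3).
    print(mocs_overlap([(0, 3)], [(1, 13), (1, 2)]))
```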

Update (2019-05-15, 11:16): Now I'm in Education (Program), where I'll talk about the tutorial I made for the Astroplate workshop I blogged about the other day. Hendrik is just reporting about the PyVO course I've wanted to properly publish for a long time. Pity I'll probably miss Giulia's Virtual Reality experiences because I'll have to head over to DAL later...

Update (2019-05-15, 14:18): After another Exec session over lunch I ran over to a session somewhat flamboyantly called “TAP-fostered Authentication in the Server-Client scenario”. This is about enabling access-controlled services, which I'm not really a fan of; but then I figure if people can use VO tools to access their proprietary data, chances are better that that data will eventually be usable from everyone's VO tools. Data dumped behind custom-written web pages is much less likely to be freed in the end, or so I believe. Anyway, I'm now in the game of figuring out how to do this, and I'm giving the (current) Registry perspective. The main part of the session, however, will be free discussion, a time-honored and valuable tradition at Interops.

Update (2019-05-16, 9:00): I'm now in the Theory session, where people deal with simulated data and such things (rather than, as you might guess, with the theory of publishing and/or processing data). The main reason I'm here is that theory was an early adopter of vocabularies. Due to my new(ish) role in the semantics WG, I'll have to worry about this, because things have changed a bit since they started (I'll talk about that later today) – and also, some of their vocabularies – for instance, object types – are of general interest and probably shouldn't be theory-only. Let's see how far my charm goes...

Update (2019-05-16, 12:20): I was doing a bit of back-and-forth between a DAL session (in which, among other things, my colleague Jon gave a talk on a machine-readable grammar for ADQL and Dave told us how ADQL 2.1 is coming along, previously on this blog) and a code sprint the astropy folks are holding next to the conference, where we've been discussing pyVO's future (remember pyVO? See the update for yesterday 11:16 if not).

Update (2019-05-16, 14:27): Again, in-session running: I gave a quick talk on how we'll finally get to do data collection-based discovery (rather than service-based, as we do now; lecture notes) and then walked through the garden of Paris observatory to the semantics session, where I joined while people were still discussing the age-old problem of enumerating the observatories, space-probes, and instruments in the world (an endeavour that, very frankly, scares me a tiny bit because of its enormous size). After talks on the use of vocabularies in CAOM2 (Pat) and theory (Emeric), I'll then do my first formal action in the semantics WG: I'll disclose my plans for specifying how the IVOA should do vocabulary work in the future.

Update (2019-05-16, 17:56): So, the afternoon, between my talks in Registry II and Semantics, planning for the Semantics roadmap (this is something where WG chairs say what they're planning until the next Interop; more on this, I guess, tomorrow), talking with the theory people about how their vocabularies will better integrate with the wider VO, and passing on pyVO to core astropy folks, was a bit too busy for live-blogging. I'm concluding the day with a “splinter” on the development of Datalink. This is pure discussion without a formal talk, which, frankly, often is the most useful format for things we do at Interops, and there are almost 20 people here. In contrast to yesterday's after-show splinter (which was on integration of the VO Registry with b2find), I'm just a participant here. Phewy.

Update (2019-05-17, 8:52): We're about to start the last act of Interops, where the working group chairs report on the progress made during the Interop. That only three WGs have their slides ready at the time of writing shows that this is always a bit of a real-time affair – understandably, because the last bargains and agreements are being worked out as I write. This time around, though, there's a variation on that theme: The astropy hackathon that ran in parallel to the Interop will also present its findings, and I particularly rejoice because they're taking over pyVO development. That's excellent news because Stefan, who's curated pyVO for the last couple of years from Heidelberg, has moved on, and so pyVO might have been orphaned. That's what I call a happy end.

Update (2019-05-17, 13:01): So, after reviews and a kind good-bye speech by the Exec chair Mark Allen (which included quite a bit of well-deserved applause for the organisers of the meeting), the official part is over. Of course, I still have a last side-meeting: planning for what we're going to do within ESCAPE, a project linking astronomy with the European Open Science Cloud. But that's not going to take more than an hour. Good-bye.
